Prologue

In this post, I will walk you through the mindset and ideas behind designing good EJB APIs. It is kind of long, but I want you to understand the reasoning behind every choice.

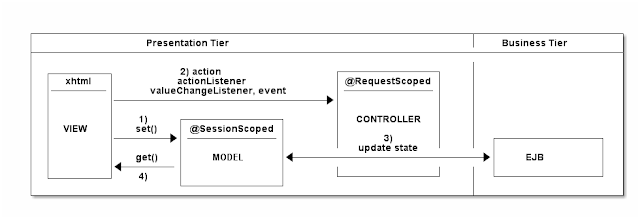

Java EE was designed based on the observation that most business applications are built on a 3-tier architecture:

- Data Access Layer (JPA, JDO, JDBC, proprietary NoSQL APIs)

- Business Logic Layer (EJB, CDI)

- Presentation Layer (Servlet, JSF, JSP, JAX-RS, JAX-WS)

Although the Java EE stack APIs are fairly well designed, they are also very complex and have steep learning curves. Not only is each technology hard to learn; one also has to understand how they cooperate.

I like the phrase "separation of concerns". Good software architecture is born only when these three words are constantly on the minds of the architects and developers. The 3 tiers are based precisely on separating the concerns of the program components. A good EJB design depends on whether or not you understand the concerns your application has to address.

Design your software architecture through use-cases

The advantage of using JPA, EJB and JSF only becomes clear (rather than overwhelming) when you first sit down at your desk and start thinking about the use-cases of your software. These are basically user interactions with your software. You must have a very clear definition of at least a subset of related use-cases for your software.

Example:

You have to create software for a tennis club. The users are all going to be players, and twice every year there is a championship. The championship is among teams. A player can be a member of several teams. The teams are only permanent for one championship; they are re-formed for every new championship. A player assigns herself to a team (or more). Of course, there are many matches in a championship. It's the role of the administrator to create a championship, declare teams, create matches, and administer match results once they are played. The role of the users is to view the matches and championship results, and to assign themselves to a team.

This is only a very simplified specification, but turning it into software takes a great deal of consideration. You have to identify the exact use-cases, such as:

- administrator creates championship

- administrator modifies championship attributes

- administrator creates a match (when? who will be the opponents? what are the minimum attributes for a match?)

- administrator modifies match attributes

- administrator assigns team players to matches as opponents

- move a player from one team to another

- ...

Why do you need to define them so explicitly? Because you have to understand and partition the problem domain. If you don't know the problem well enough, you will not be able to separate what belongs to the business logic layer from what belongs to the presentation layer. And this is the key to creating a good EJB API.

Know your problem domain and use-cases well!

Atomic operations and consistency (EJB)

The brain of your software sits in your EJBs. For us, one of their most important features is:

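They are transactional by default (for RDBMS and messaging resources). When you access your database from an EJB, most probably via JPA, a transaction is already running for the database session, because container-managed transactions with the REQUIRED attribute are the default. As a consequence, every EJB method called from the web layer is atomic with respect to the underlying database: either all DB changes made in that method take place, or none do. (The container rolls back on system exceptions, e.g. unchecked exceptions; a checked application exception only triggers a rollback if its class is annotated with @ApplicationException(rollback = true) or if you call setRollbackOnly() on the EJB context.)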

You must map each use-case you found to exactly one EJB method call from the web GUI! This is crucial to keep your data consistent.

Trivial Example:

The last use-case was to take a player out of team A and put her into team B instead. Broken pseudo-code in the web layer might look like this:

```java
@EJB
ChampionshipBean championshipBean;

public void transferPlayer(Player player, Team toTeam) {
    Team currentTeam = championshipBean.getCurrentTeam(player);   // 1.
    championshipBean.deletePlayerFromTeam(player, currentTeam);   // 2.
    championshipBean.assignPlayerToTeam(player, toTeam);          // 3.
    refreshView();
}
```

One (among many) ways for this code to go wrong: after you have deleted player from her team (2.), toTeam is deleted by another administrator who is using the software concurrently, so (3.) throws an exception saying "no such team as toTeam". The result is that player is removed from her currentTeam but not assigned to another one. When the user sees the error "no such team as toTeam", he expects the player to still be in her team as before the change, but she is gone. This is erroneous behavior: each of the three calls ran in its own transaction, so the committed deletion in (2.) is not undone when (3.) fails.

If you move transferPlayer(Player player, Team toTeam) into an EJB method, the same scenario leaves the database in a consistent state, because all changes happen in one atomic operation: either every change takes place, or none does.
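To make the difference tangible without an application server, here is a minimal plain-Java sketch. It is a simulation under stated assumptions, not EJB code: the hypothetical TeamDb class stands in for the database, and its inTransaction method mimics a container-managed rollback by restoring a snapshot when a RuntimeException escapes. Teams and players are plain strings for brevity.

```java
import java.util.HashMap;
import java.util.HashSet;
import java.util.Map;
import java.util.Set;

// Hypothetical toy "database": team name -> names of its members.
// It only simulates what the EJB container and JPA do for us.
class TeamDb {
    private Map<String, Set<String>> teams = new HashMap<>();

    void createTeam(String team) { teams.put(team, new HashSet<>()); }

    void deleteTeam(String team) { teams.remove(team); }

    boolean isMember(String team, String player) {
        Set<String> members = teams.get(team);
        return members != null && members.contains(player);
    }

    String currentTeam(String player) {
        for (Map.Entry<String, Set<String>> e : teams.entrySet()) {
            if (e.getValue().contains(player)) return e.getKey();
        }
        return null;
    }

    void removePlayer(String player, String team) { membersOf(team).remove(player); }

    void addPlayer(String player, String team) { membersOf(team).add(player); }

    private Set<String> membersOf(String team) {
        Set<String> members = teams.get(team);
        if (members == null) throw new IllegalStateException("no such team as " + team);
        return members;
    }

    // Runs all changes as one unit: if a RuntimeException escapes, the snapshot
    // taken before the work started is restored (a simulated rollback).
    void inTransaction(Runnable work) {
        Map<String, Set<String>> snapshot = new HashMap<>();
        teams.forEach((name, members) -> snapshot.put(name, new HashSet<>(members)));
        try {
            work.run();
        } catch (RuntimeException e) {
            teams = snapshot;
            throw e;
        }
    }
}

class TransferService {
    private final TeamDb db;

    TransferService(TeamDb db) { this.db = db; }

    // Broken variant: each step is applied immediately, like three separate EJB calls.
    void transferInSteps(String player, String toTeam) {
        String current = db.currentTeam(player);
        db.removePlayer(player, current);
        db.addPlayer(player, toTeam);
    }

    // EJB-style variant: the whole use-case is one atomic operation.
    void transferPlayer(String player, String toTeam) {
        db.inTransaction(() -> {
            String current = db.currentTeam(player);
            db.removePlayer(player, current);
            db.addPlayer(player, toTeam);
        });
    }
}
```

To keep it deterministic, the sketch assumes the target team is deleted before the transfer starts rather than between steps 2 and 3. The outcome is the same: in the step-by-step variant step 2 succeeds, step 3 fails and the player ends up in no team, while transferPlayer leaves her in her original team.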

Different call scenarios for the same use-case

Sticking with the previous example, suppose you want to expose a REST interface to your application, or just create a different view for the player transfer option in your web GUI. You need the same behavior initiated from a totally different context. This is a convenient situation to check whether you created a good EJB design or a crappy one. In the latter case, you will see that you exposed too much business logic in your presentation layer: you connected JPA entities together via setters, made changes to detached entities that are a couple of seconds old and come from @SessionScoped JSF managed beans, or made several database-changing EJB calls for the same use-case.

When you encounter any of the above patterns, remember separation of concerns from the first section. The use-case should be implemented once, in the EJB; then it can be called from as many places as you wish.
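A minimal sketch of "implement once, call from anywhere", using hypothetical names: ChampionshipService plays the role of the EJB's business interface (a @Stateless bean in a real application), while TransferPage and TransferResource stand in for a JSF backing bean and a REST resource. Both front ends only translate their input and delegate to the same method; neither touches entities.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical business interface: in Java EE the implementation would be a @Stateless EJB.
interface ChampionshipService {
    void transferPlayer(int playerId, int toTeamId);
}

// Test double that records calls, standing in for the real transactional implementation.
class RecordingChampionshipService implements ChampionshipService {
    final List<String> calls = new ArrayList<>();

    @Override
    public void transferPlayer(int playerId, int toTeamId) {
        calls.add(playerId + "->" + toTeamId);
    }
}

// JSF-style backing bean: collects input, delegates, returns a navigation outcome.
class TransferPage {
    private final ChampionshipService service;

    TransferPage(ChampionshipService service) { this.service = service; }

    String submit(int playerId, int toTeamId) {
        service.transferPlayer(playerId, toTeamId);
        return "teams";
    }
}

// REST-style resource: parses request values and delegates to the very same method.
class TransferResource {
    private final ChampionshipService service;

    TransferResource(ChampionshipService service) { this.service = service; }

    int post(String playerId, String toTeamId) {
        service.transferPlayer(Integer.parseInt(playerId), Integer.parseInt(toTeamId));
        return 204; // HTTP "No Content"
    }
}
```

Adding a third front end later means writing another thin adapter; the use-case logic and its transaction boundary stay in one place.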

A clean EJB API

JPA Entity parameters?

I struggled a lot with whether to pass entities as EJB method parameters, or only entity attributes.

```java
@Stateless
public class ChampionshipBean {

    public void assignPlayerToTeam(Player player, Team team) { .. }
    // vs.
    public void assignPlayerToTeam(Integer playerId, Integer teamId) { .. }

    public void changeMatch(Match match) { .. }
    // vs.
    public Match setMatchPoints(Integer matchId, List<Integer> points) { .. }
}
```

Then I recalled what we used to do in PHP: pass only the primary keys of database records to the forms in hidden input fields, to identify those records after a form POST. And it's the same when we use JSF. The only difference is that the JSF framework hides this behavior, and we can keep whole entities in memory in @SessionScoped managed beans across requests. However, we often don't even need to keep whole entities or lists of entities in memory in the web layer, because we only want the primary key, or we transform them into new POJOs that are much easier to use in a dataTable. We still need the entity's primary key to identify the original entity for our operations, though.

My conclusion was that:

In most cases it is totally enough to pass only the primary key of an entity back to the EJB, plus the parameters that have to be changed.

Because:

- often we don't need to keep whole entities in web layer (presentation layer)

- if we don't have the original entity in memory in the web layer, a method like assignPlayerToTeam(Player player, Team team) requires us to first call a method such as getPlayerForId(Integer playerId) and then pass the returned Player to it. This is totally unnecessary and annoying.

- if we pass whole entities to the EJB methods, they are detached, so every time they have to be attached again with entityManager.merge(player) (which returns a managed copy; the argument itself stays detached). This does not seem to be a lot of trouble, but when you have complex business logic and make use of nested EJB calls, you will not want to merge your entities unnecessarily all the time just because you are not sure whether the parameter is attached or detached.

- when you want to use your EJBs remotely because you deploy on a DAS cluster, you want to eliminate all unnecessary traffic overhead, since every argument of a remote call is serialized and sent over the wire.
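As a sketch of the first two points, assuming a simplified, hypothetical Player entity: the web layer can turn entities into small row objects for a dataTable and keep only the primary key, which is all it needs to send back to an ID-based EJB method such as assignPlayerToTeam(Integer playerId, Integer teamId).

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical, simplified entity: in a real application this would be a JPA @Entity.
class Player {
    final Integer id;
    final String name;
    final String email;

    Player(Integer id, String name, String email) {
        this.id = id;
        this.name = name;
        this.email = email;
    }
}

// What a dataTable row actually needs: the primary key plus display text.
class PlayerRow {
    final Integer id;
    final String label;

    PlayerRow(Integer id, String label) {
        this.id = id;
        this.label = label;
    }
}

class PlayerRows {
    // Converts entities into rows, so the entities need not be kept in the session.
    static List<PlayerRow> from(List<Player> players) {
        List<PlayerRow> rows = new ArrayList<>();
        for (Player p : players) {
            rows.add(new PlayerRow(p.id, p.name + " <" + p.email + ">"));
        }
        return rows;
    }
}
```

When the user picks a row, only row.id travels back, e.g. championshipBean.assignPlayerToTeam(row.id, selectedTeamId), where selectedTeamId is a hypothetical field of the backing bean.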

Unambiguous side effects of EJB methods

The previous reasoning was more of a technical one, but there is a semantic reason as well. When you pass an entity to an EJB method, the caller cannot know for sure what is going to be persisted into the database. Back to this example:

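```java
import java.util.List;
import javax.ejb.Stateless;
import javax.persistence.EntityManager;
import javax.persistence.PersistenceContext;

@Stateless
public class ChampionshipBean {

    @PersistenceContext
    private EntityManager entityManager;

    public void assignPlayerToTeam(Player player, Team team) {
        player.setTeam(team);
        List<Player> players = team.getPlayers();
        players.add(player);
        entityManager.merge(player); // copies ALL state of the detached player, not just its team
        entityManager.merge(team);
    }
}
```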

This seems reasonable, right? Well, it's totally wrong! The developer on the presentation layer will not know what side effects are going to take place besides the two entities getting a relationship. He might think he can also change the player's name with player.setName("Bob"); before calling the method above, because the entity will be merged anyway, so the new name will surely be persisted.

But you, who implemented assignPlayerToTeam(Player player, Team team), might have been thoughtful and intended that such side effects cannot happen: that the method changes nothing besides setting the relationship between the two entities. The caller cannot tell which of the two behaviors to expect. To clear up this ambiguity, use only primary keys as parameters: assignPlayerToTeam(Integer playerId, Integer teamId). If you still want to pass whole entities as arguments, be clear in the method's Javadoc about how it behaves, and be consistent across similar methods!
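A minimal sketch of the ID-based variant, with a hypothetical in-memory Store standing in for the EntityManager (findPlayer and findTeam play the role of entityManager.find). Because the caller hands over only keys, the method can touch nothing except the relationship, which it sets on both sides just like the original example.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Hypothetical, simplified entities with a bidirectional Player <-> Team relationship.
class Team {
    final Integer id;
    final List<Player> players = new ArrayList<>();

    Team(Integer id) { this.id = id; }
}

class Player {
    final Integer id;
    String name;
    Team team;

    Player(Integer id, String name) {
        this.id = id;
        this.name = name;
    }
}

// Stand-in for the persistence context: the find methods return the managed instances.
class Store {
    final Map<Integer, Player> players = new HashMap<>();
    final Map<Integer, Team> teams = new HashMap<>();

    Player findPlayer(Integer id) {
        Player p = players.get(id);
        if (p == null) throw new IllegalArgumentException("no such player: " + id);
        return p;
    }

    Team findTeam(Integer id) {
        Team t = teams.get(id);
        if (t == null) throw new IllegalArgumentException("no such team: " + id);
        return t;
    }
}

class ChampionshipService {
    private final Store store;

    ChampionshipService(Store store) { this.store = store; }

    // Links player and team on both sides. No other attribute can change, because
    // the caller never hands over an entity whose state could be merged.
    void assignPlayerToTeam(Integer playerId, Integer teamId) {
        Player player = store.findPlayer(playerId);
        Team team = store.findTeam(teamId);
        player.team = team;
        if (!team.players.contains(player)) {
            team.players.add(player);
        }
    }
}
```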

Changing simple Entity properties as opposed to entity relationships

What does public void changeMatch(Match match) do? You can create a method like this, but a better name would be changeMatchProperties(Match match). It suggests that the method allows changing simple properties such as match#startTime or match#place, but does not allow creating or deleting relationships between entities: match.setPlayerOne(player); will simply have no effect at all.

You have to create separate EJB methods for updating an entity's properties and for updating relationships between entities, mainly because a bidirectional JPA relationship should be set on both sides, and then both entities have to be merged. A defensive solution for changeMatchProperties(Match match):

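```java
public void changeMatchProperties(Match match) {
    // Load the managed instance instead of merging the caller's detached copy.
    Match attachedMatch = entityManager.find(Match.class, match.getId());
    if (attachedMatch == null) {
        throw new IllegalArgumentException("No match with id " + match.getId());
    }
    // Copy simple properties only; relationships (e.g. playerOne) are ignored.
    attachedMatch.setStartTime(match.getStartTime());
    attachedMatch.setPlace(match.getPlace());
    // set other properties
    // attachedMatch is managed, so the changes are flushed on commit; no merge() needed.
}
```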
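To see the defensive copy in action without a container, here is a plain-Java sketch; the Map stands in for the persistence context and all names are hypothetical. Only the whitelisted simple properties are copied from the caller's detached instance, so a relationship change such as a new playerOne is silently ignored, as the method name promises.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical, simplified Match entity: two simple properties and one relationship.
class Match {
    final Integer id;
    String startTime;
    String place;
    String playerOne; // stands in for a relationship to a Player entity

    Match(Integer id, String startTime, String place, String playerOne) {
        this.id = id;
        this.startTime = startTime;
        this.place = place;
        this.playerOne = playerOne;
    }

    // Simulates the detached copy held by the presentation layer.
    Match copy() { return new Match(id, startTime, place, playerOne); }
}

class MatchService {
    // Stand-in for the persistence context: id -> managed instance.
    final Map<Integer, Match> managed = new HashMap<>();

    void changeMatchProperties(Match match) {
        Match attached = managed.get(match.id);
        if (attached == null) throw new IllegalArgumentException("no such match: " + match.id);
        // Copy simple properties only; the relationship is deliberately not touched.
        attached.startTime = match.startTime;
        attached.place = match.place;
    }
}
```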

Some rules of thumb

- the name must be clear about what exactly the method does

- follow consistent naming conventions in your code to differentiate CRUD operations, entity relationship handling, and more complex functions

- if entities are passed as parameters, only allow the changes that the method name suggests, with no unexpected side effects. Prohibit relationship changes in methods named changeEntityProperties.

- create methods for entity relationship handling that do not allow changing any other entity attributes, only attaching entities to each other

- prefer passing primary keys over passing entities wherever possible

- never ever try to pass information back from an EJB by modifying objects passed as arguments! If you ever want to call your methods remotely, you will be surprised: remote calls pass arguments by value, so the caller never sees those modifications.
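Why the last rule matters can be demonstrated in plain Java. Remote EJB interfaces use pass-by-value semantics: arguments are serialized on the client and deserialized on the server. The sketch simulates that with a hypothetical copyByValue helper based on Java serialization; ValidationBean and ValidationReport are illustrative names, not part of any API.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.ObjectInputStream;
import java.io.ObjectOutputStream;
import java.io.Serializable;
import java.util.ArrayList;
import java.util.List;

class ValidationReport implements Serializable {
    final List<String> messages = new ArrayList<>();
}

// Simulates remote-call marshalling: the "server" receives a serialized copy.
class RemoteCallSimulator {
    @SuppressWarnings("unchecked")
    static <T extends Serializable> T copyByValue(T arg) {
        try {
            ByteArrayOutputStream bytes = new ByteArrayOutputStream();
            try (ObjectOutputStream out = new ObjectOutputStream(bytes)) {
                out.writeObject(arg);
            }
            try (ObjectInputStream in =
                     new ObjectInputStream(new ByteArrayInputStream(bytes.toByteArray()))) {
                return (T) in.readObject();
            }
        } catch (IOException | ClassNotFoundException e) {
            throw new IllegalStateException(e);
        }
    }
}

class ValidationBean {
    // Anti-pattern: reports problems by mutating the argument.
    void validateTeamName(String name, ValidationReport out) {
        if (name == null || name.isEmpty()) out.messages.add("team name missing");
    }

    // Better: return the information explicitly.
    List<String> validateTeamName(String name) {
        List<String> messages = new ArrayList<>();
        if (name == null || name.isEmpty()) messages.add("team name missing");
        return messages;
    }
}
```

Called locally, the mutating variant appears to work; called "remotely", the caller's report stays empty. The overload that returns its result behaves the same in both cases.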

Don't create entity facades for CRUD operations as EJBs

See point 5 of http://weblogs.java.net/blog/felipegaucho/archive/2009/04/a_generic_crud.html. This is a good pattern for a data access layer to use inside your EJBs! However, consider that a decent project can have dozens of entities. Do you really want to create 10-20 EJBs just for CRUD operations, which will only be a small part of your complex use-cases? When you modify match attributes or set points for matches, you must enforce constraints in your EJB, as I described in "Unambiguous side effects of EJB methods". Simple create, read, update and delete operations on single entities will be rare, because you usually don't just delete a team: you also have to deal with the matches you have already created for it. So think before you start building these kinds of facades, and check whether you really need all of them or only a subset. Don't create unnecessary methods in your EJB API; they cause confusion.

Accordingly, don't create an EJB for each of your entities! Create EJBs for groups of your problem domain: managing matches, managing users, managing championships.
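Returning to the team-deletion example, here is a minimal sketch of what a problem-specific service offers instead of a raw CRUD delete. All names are hypothetical and the data lives in memory; the policy that deleting a team also deletes the matches it plays in is an assumption for illustration, since your domain might instead refuse the deletion.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Hypothetical, simplified match: the primary keys of the two opposing teams.
class Match {
    final int id, teamA, teamB;

    Match(int id, int teamA, int teamB) {
        this.id = id;
        this.teamA = teamA;
        this.teamB = teamB;
    }

    boolean involves(int teamId) { return teamA == teamId || teamB == teamId; }
}

// Hypothetical problem-specific service (a @Stateless bean in Java EE):
// it exposes the use-case, not a bare "delete row" operation.
class TeamManager {
    final Map<Integer, String> teams = new HashMap<>();
    final List<Match> matches = new ArrayList<>();

    // Assumed policy: deleting a team also deletes every match it plays in.
    void deleteTeam(int teamId) {
        if (!teams.containsKey(teamId)) {
            throw new IllegalArgumentException("no such team: " + teamId);
        }
        matches.removeIf(m -> m.involves(teamId));
        teams.remove(teamId);
    }
}
```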

Designate one EJB, e.g. RepositoryBean, for read-only operations. It is very unlikely that a web page needs to present only one entity; you usually have to request several entities to present the data on your page. Ask yourself: is it easier to inject only one EJB into the presentation layer, or a bunch of them?

How can "grouping the problems into different EJBs" and "designating one super EJB" not contradict each other? They don't have to. When you need queries that are very specific to one set of problems, e.g. findAllMatchesOfOpponentTeams(Integer teamAPk, Integer teamBPk);, you may put them into the ChampionshipManagedBean, because they are logically cohesive with it. But for single-entity or entity-list requests, you will be glad not to inject 2-3-4 EJBs into your presentation layer just to present one page. On the other hand, when updating your database, it's easier to find the right EJB method within a single, problem-specific class.
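A sketch of such a problem-specific query, assuming an in-memory list of hypothetical Match objects that reference the two opposing teams by primary key. In a real EJB this would be a JPQL query; the one in the comment assumes entity and field names (Match, teamA, teamB) that the post does not define.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.stream.Collectors;

// Hypothetical, simplified Match: primary keys of the two opposing teams.
class Match {
    final Integer id;
    final Integer teamA;
    final Integer teamB;

    Match(Integer id, Integer teamA, Integer teamB) {
        this.id = id;
        this.teamA = teamA;
        this.teamB = teamB;
    }
}

class ChampionshipQueries {
    final List<Match> matches = new ArrayList<>();

    // In-memory equivalent of a JPQL query along these lines (names assumed):
    //   SELECT m FROM Match m
    //   WHERE (m.teamA.id = :a AND m.teamB.id = :b)
    //      OR (m.teamA.id = :b AND m.teamB.id = :a)
    List<Match> findAllMatchesOfOpponentTeams(Integer teamAPk, Integer teamBPk) {
        return matches.stream()
                .filter(m -> (m.teamA.equals(teamAPk) && m.teamB.equals(teamBPk))
                        || (m.teamA.equals(teamBPk) && m.teamB.equals(teamAPk)))
                .collect(Collectors.toList());
    }
}
```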

When you have to decide which method goes into which EJB, think about the cohesion of your methods. Ask yourself: "Am I going to use these methods together, one after another? Do these methods address a single problem group, so I'd better find them in one place?"

http://en.wikipedia.org/wiki/Cohesion_(computer_science)#Types_of_cohesion

Public and private EJB API

The preceding examples were very simple CRUD operations. But what should you do when it comes to more complex use-cases? You can create reusable EJB methods that are called from other EJB methods. It is also good practice to put EJBs intended for public and for internal use into different packages (unfortunately, it's not allowed to declare EJBs, or EJB methods, as package-private). That way you can create reusable EJB methods that are intended to be used from other EJB methods, but not from client code (the presentation layer). For example, you may pass whole entities to nested EJB method calls instead of only the primary keys, and you don't have to worry about merging the entities into the entity manager within those called methods: with the default REQUIRED attribute, a local nested call joins the caller's transaction and persistence context, so the entities stay managed. But it has to be well documented and clear which EJBs are meant to be used by the client.
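A minimal sketch of the split, with hypothetical class names and an in-memory map instead of an EntityManager. ChampionshipFacade is the public API: primary keys in, a primary key out. MatchScheduler is the internal API: it trusts that the Team instances it receives are already loaded (managed, in a real container) and just links them. In a real project these would be two EJBs in separate packages; a single file can only show them side by side.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Hypothetical, simplified entities.
class Team {
    final int id;
    final List<Match> matches = new ArrayList<>();

    Team(int id) { this.id = id; }
}

class Match {
    final int id;
    final Team home;
    final Team away;

    Match(int id, Team home, Team away) {
        this.id = id;
        this.home = home;
        this.away = away;
    }
}

// Internal API, meant only for other beans: takes already-loaded entities,
// so it neither re-loads nor merges them.
class MatchScheduler {
    private int nextId = 1;

    Match schedule(Team home, Team away) {
        if (home == away) throw new IllegalArgumentException("a team cannot play itself");
        Match m = new Match(nextId++, home, away);
        home.matches.add(m);
        away.matches.add(m);
        return m;
    }
}

// Public API for the presentation layer: primary keys in, primary key out.
class ChampionshipFacade {
    final Map<Integer, Team> teams = new HashMap<>();
    private final MatchScheduler scheduler = new MatchScheduler();

    int createMatch(int homeTeamId, int awayTeamId) {
        Team home = find(homeTeamId);
        Team away = find(awayTeamId);
        return scheduler.schedule(home, away).id;
    }

    private Team find(int id) {
        Team t = teams.get(id);
        if (t == null) throw new IllegalArgumentException("no such team: " + id);
        return t;
    }
}
```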

Please share your thoughts! :)